SQL Monitoring - 5 steps to Full Control

Monitoring your servers is a natural part of a DBA's responsibilities and essential for delivering reliable and stable services. But are all SQL monitoring tools created equal?

This article concerns the why, what, and how to monitor SQL servers. With this knowledge in hand, you are better equipped to decide what you need from a monitoring tool and how to benefit from it.

The first thing you want to do as a DBA is to see the status of the database instances you manage – and that’s where we start with SQL monitoring. With only a handful of instances, you may be able to do it by hand, but once the numbers start increasing, you will need some kind of tool or solution to help you. And that’s where you face the first question – what monitoring tool should I use?

There are many SQL monitoring solutions to choose from, or you can roll your own – so let´s talk about what is important and why when it comes to monitoring SQL servers.

First, why do you want to monitor all those SQL instances? It may sound like a simple question, but let's look closer at some reasons for monitoring instances:

It may be a simple question, but there is a lot you can monitor:

Monitoring collects data. This needs to be stored to be used later for analyzing trends or reporting.

The more data you collect, and the more frequently you collect it, the more space you will need to store the data. So consider the following:

Monitoring should be done continuously as long as the server is in operation.

Some parameters, like CPU load, should be monitored very frequently, like every second, to provide a good and detailed profile of the load on the server.

Other parameters, like disk space, can be monitored much less frequently.

For each parameter, we need to find the optimum frequency. Too frequently will produce a load on the monitored system and generate too much data to be stored. If more often is needed, data will be detailed enough to let you analyze and find the cause of a given problem.

It is a question of balancing resource usage vs. usefulness.

Monitoring solutions can collect a lot of data. More data leaves you overwhelmed, and looking for that needle of useful information in the haystack of raw data can be frustrating. Too little information can lead to an inability to locate issues or reach incorrect conclusions, which can be very costly.

If you collect too much data, you will run into two other problems as well:

Automation can scan through all that data and notify you only when something is out of the normal and needs your attention.

You will then need to turn the raw data into useful information that gives you insight into what's going on and what you can do about it.

What's important is how you present the data and how you can turn the data into useful, actionable information.

Good monitoring tools should be able to automate most, if not all, of this for you.

This may not be obvious, but monitoring can be a rather time- and resource-consuming exercise.

Consider the following:

For large estates, you have a few more things to consider:

You can perform monitoring on many levels, from the basic manual methods to fully automated enterprise-class monitoring and integrated management solutions.

For this level, almost anything will work. Simple scripts, any infrastructure monitoring platform, and even manual monitoring can work.

For this level, simple monitoring solutions with checks and alerts can function. We also see many roll-your-own scripts being used here.

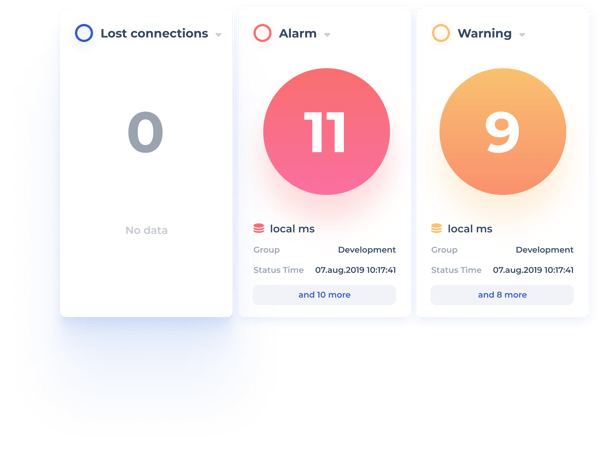

Here things get more complicated. A lot more parameters are checked and checked more frequently. Values are compared to thresholds and alerts or warnings generated when required. This can quickly become too complicated for roll-your-own solutions, and manual monitoring is no longer realistic.

If you are aiming for preventive action, you should also consider a robust maintenance solution. Rather than wait for an alert that suggests you take action – be proactive and run some good maintenance scripts.

So for level 3 and up, you are most likely looking at a 3rd party monitoring/management solution.

Many DBAs are familiar with the scripts done by the well-known Swedish DBA Ola Hallengren. Many considered the "gold standard" for SQL Server maintenance scripts. https://ola.hallengren.com/. But even that can be improved, especially for larger sites where full-scale deployment can be a big challenge.

No article on monitoring can be completed without mentioning DIY monitoring scripts and tasks. Many DBAs enjoy writing their utilities, and some are quite good at it. But it comes with a price.

Many DIY (or roll-your-own) solutions cause eventual maintenance headaches. The problems arise when you don't remember who built the solution or the person who built it can't maintain it anymore. In many cases, the architect wasn't an expert in database or performance monitoring, which can result in glaring functionality shortcomings.

Monitoring can be a simple task or a complex and exhaustive process for full control over larger complex estates depending on your ambition and needs.

What you decide to implement will depend on the organization's requirements, your budget, and your skills.

If you do decide to invest in a 3rd party solution, there are many things to consider that we have highlighted here. Since you will usually live for a long time with an investment in a monitoring solution, you need to spend some time deciding what you need now ( and in the future as things grow and expand) before you jump in.

The cost of a monitoring solution can be considerable, but so can the benefits.

Look for capabilities for preventive operations, performance management, resource utilization, and, most importantly, much-improved productivity and DBA satisfaction.

+47 22 33 14 20

sales@dbwatch.com

dbWatch AS

Kongens Gate 15

0153 Oslo

Norway